How to run DeepSeek-R1 safely on Azure Foundry

Keep your data safe and run models on Azure

With all the hype around DeepSeek, everyone wants to try the model for themselves and see if it's worth it, whether to test it on their own use case, start building with it, or just try it out for fun.

When you sign up for DeepSeek on their website, they state that the data you submit is collected and stored on servers in China, where it may be used largely as they see fit. This is not ideal if you don't want to give your data away.

Microsoft just announced that DeepSeek-R1 is now available in the Azure model catalog, and I will show you how to set it up quickly. I will also show you how to configure it in OpenWebUI, which provides a good UI for using the model.

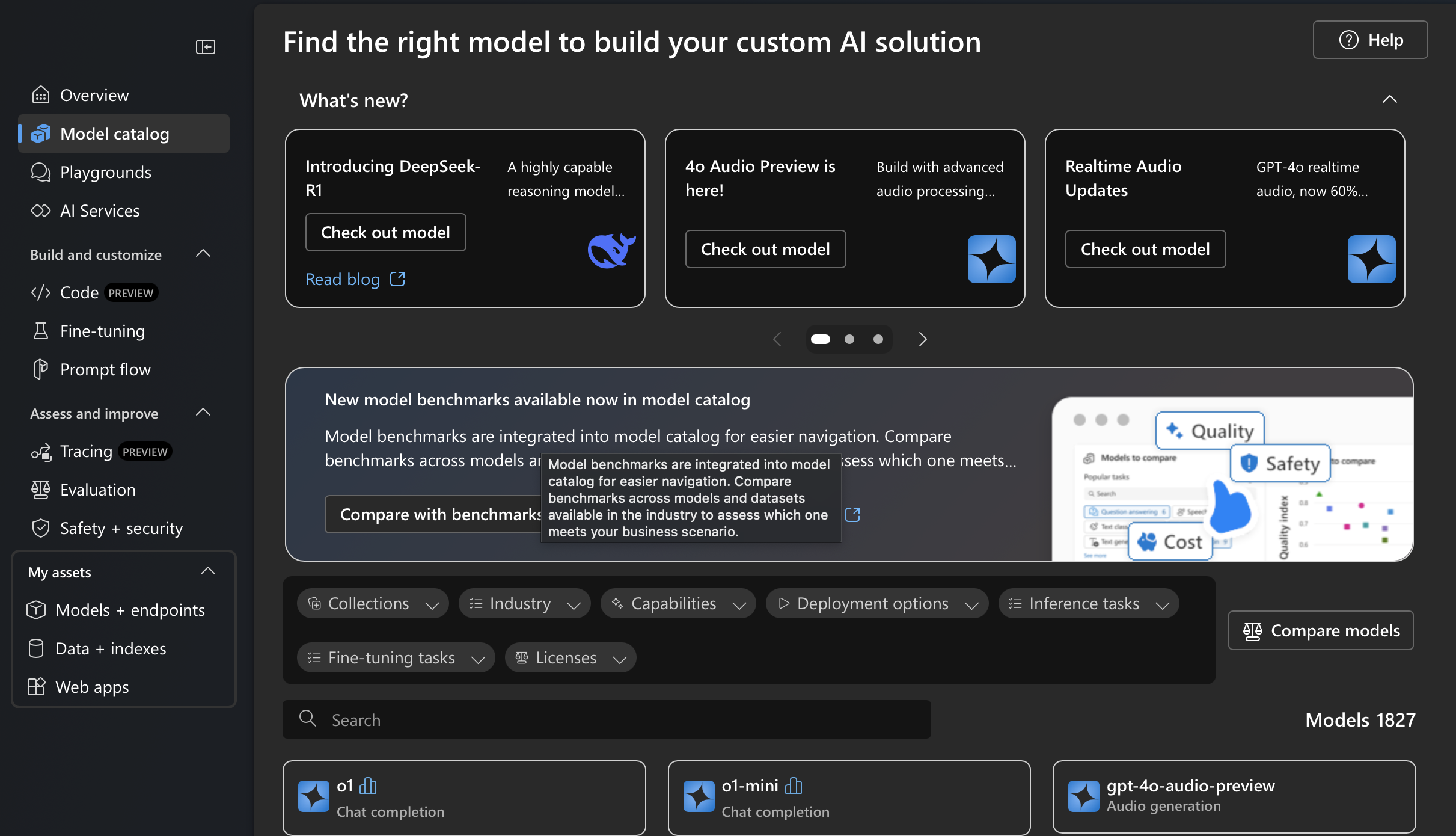

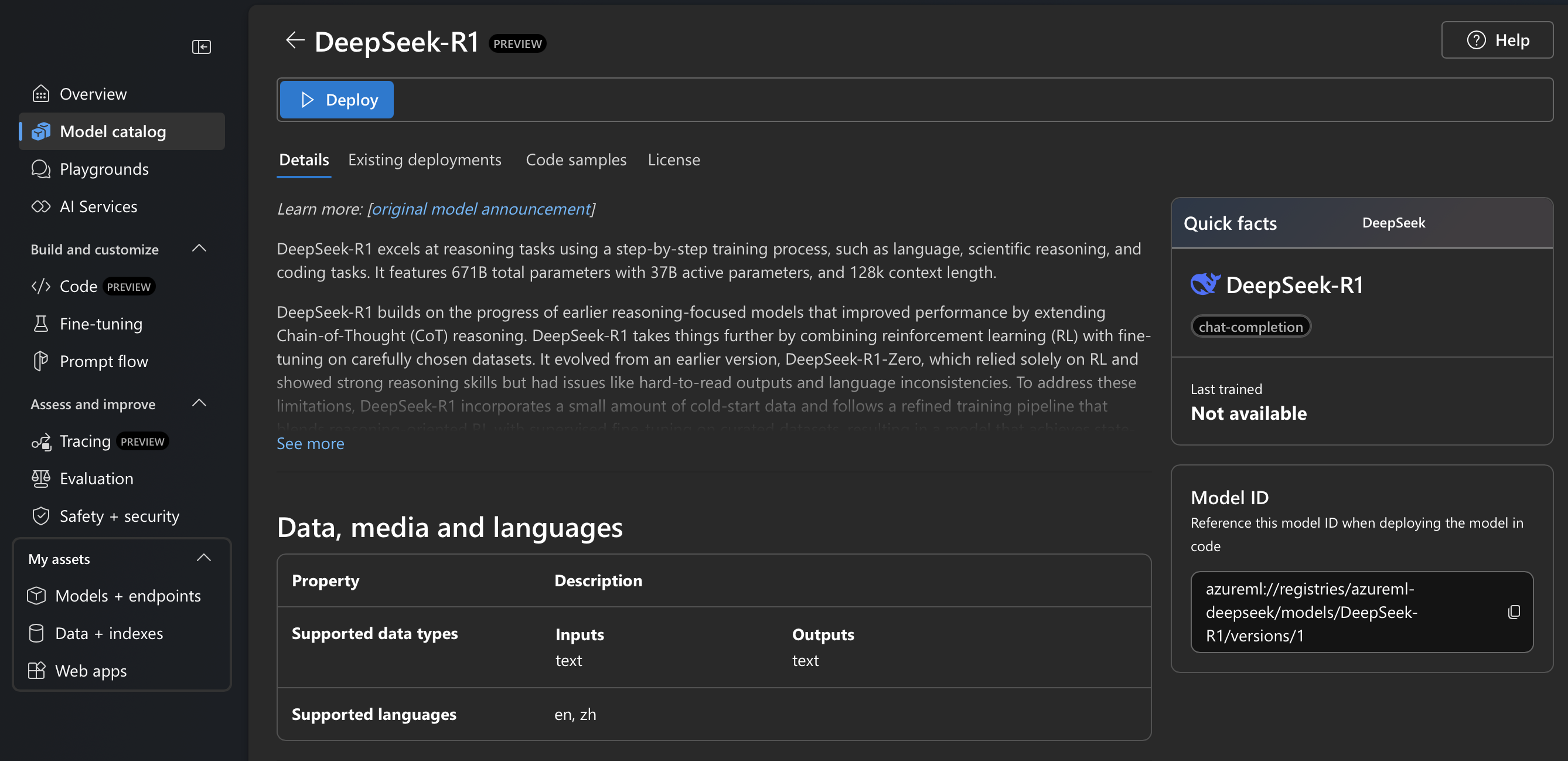

Navigate to Azure Foundry at the link below, open the model catalog, and select DeepSeek-R1.

[Azure Foundry](https://ai.azure.com)

Once selected, you can deploy the model by clicking the Deploy button.

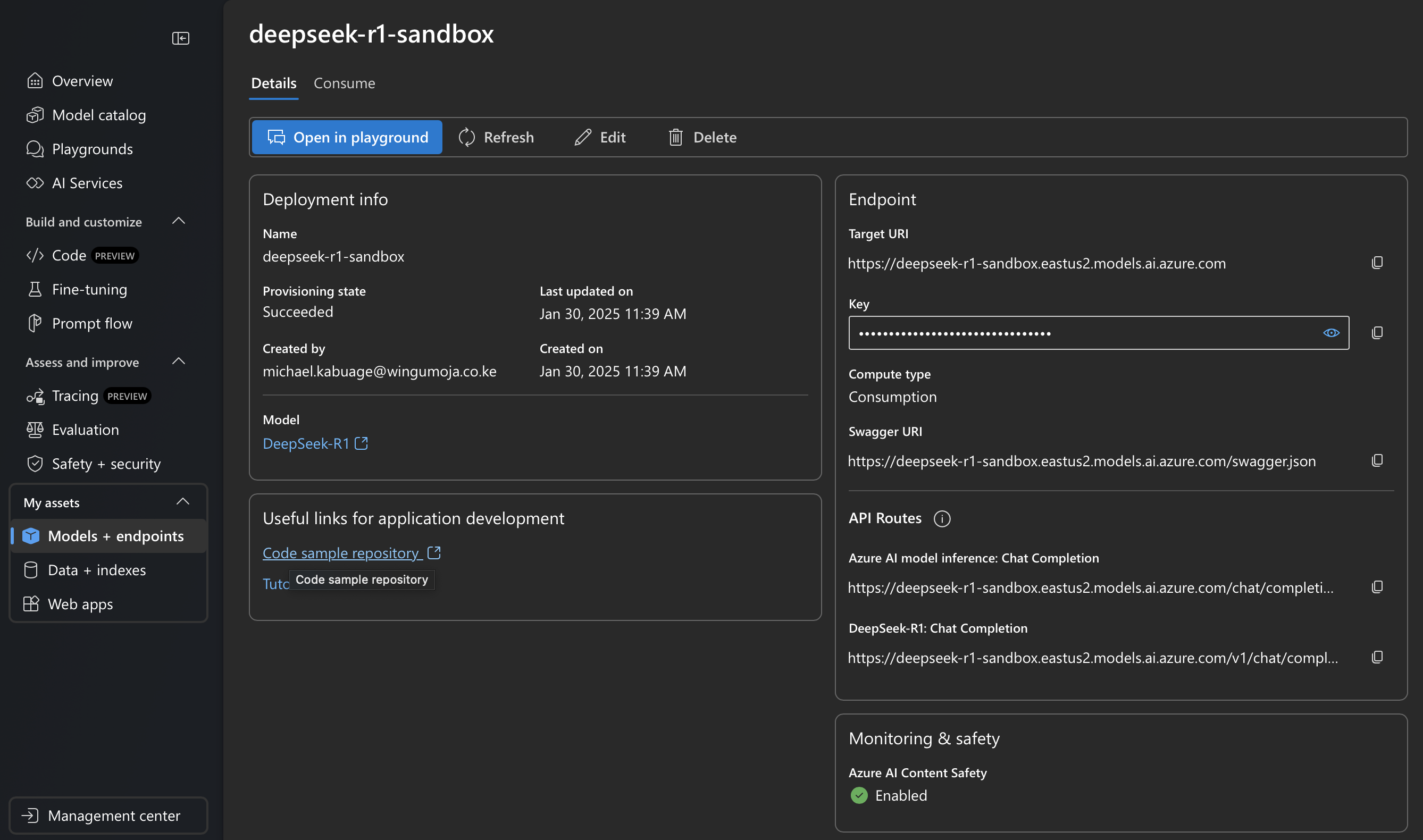

Once deployed, open your list of deployed models and click the new deployment to view its details, which is where you will find the endpoint URL and key.

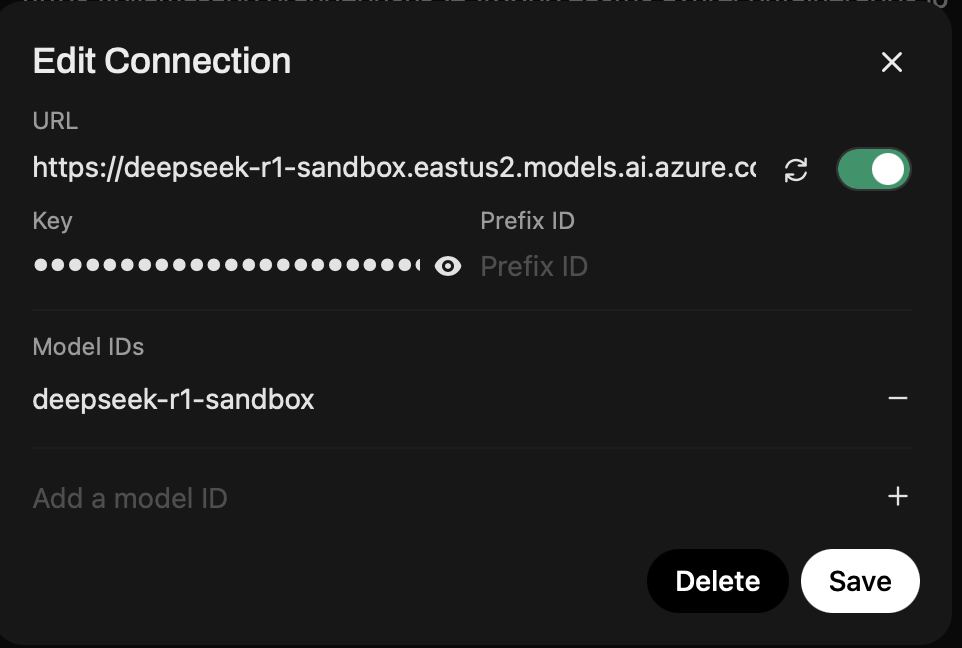

Once your model is deployed, configure the endpoint URL, key, and model name in OpenWebUI as shown below:

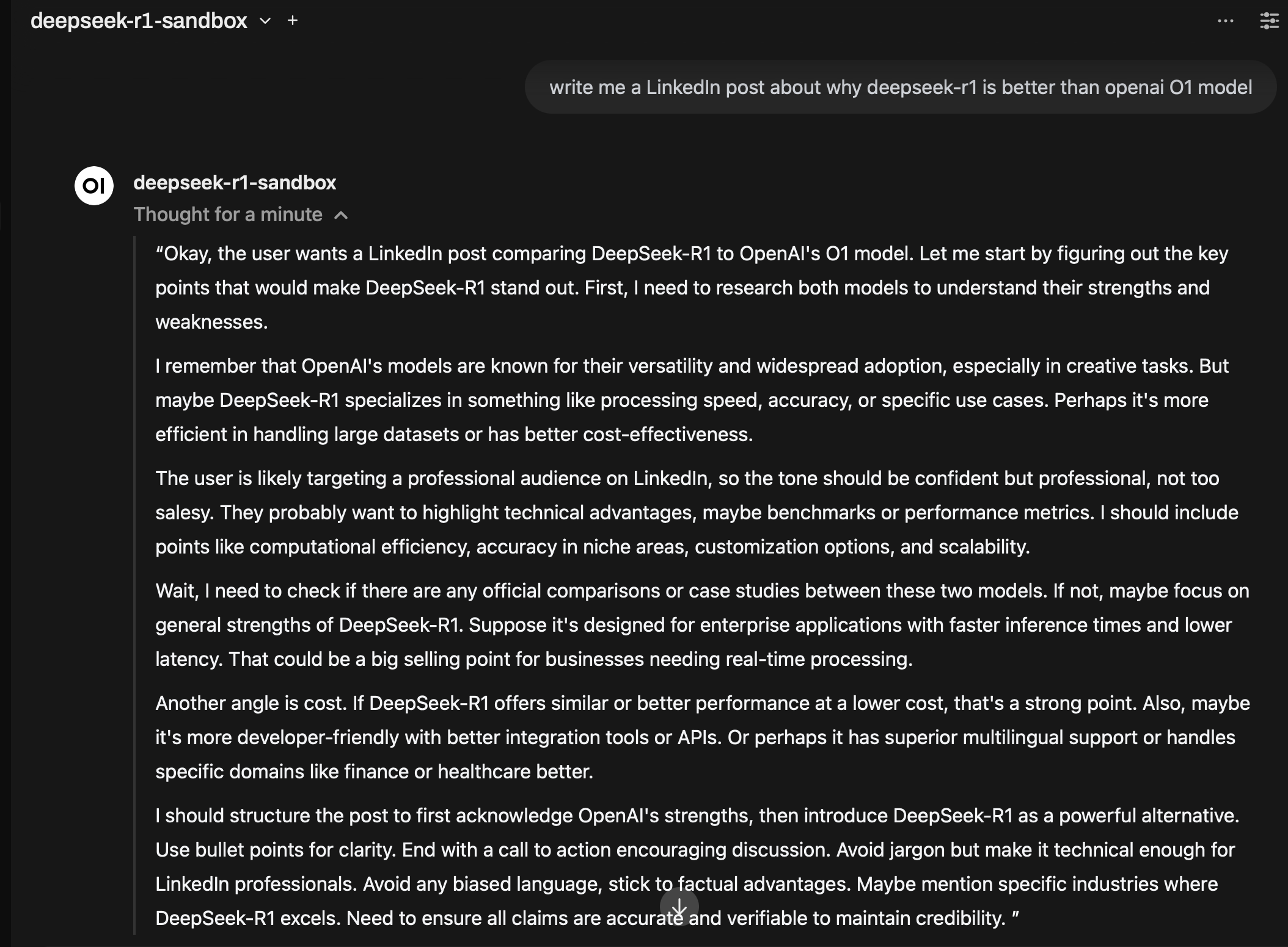
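Before wiring the values into OpenWebUI, it can help to confirm that the endpoint URL, key, and model name actually work together. The snippet below is a minimal sketch, not an official client. It assumes the URL you copied is the full address of an OpenAI-style chat completions route and that the key is accepted as a bearer token (some deployments expect an `api-key` header instead). The environment variable names `AZURE_DEEPSEEK_ENDPOINT` and `AZURE_DEEPSEEK_KEY` and the helper `build_chat_request` are made up for this example.

```python
import json
import os
import urllib.request


def build_chat_request(endpoint_url, api_key, model_name, prompt):
    """Build (but do not send) a chat completions request.

    Assumption: endpoint_url is the full chat completions URL copied from
    the deployment details page, in an OpenAI-compatible format.
    """
    payload = {
        "model": model_name,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        endpoint_url,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            # Assumption: the key is sent as a bearer token. Some
            # deployments expect an "api-key" header instead.
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


if __name__ == "__main__":
    # Hypothetical variable names; set them to your own values.
    endpoint_url = os.environ.get("AZURE_DEEPSEEK_ENDPOINT")
    api_key = os.environ.get("AZURE_DEEPSEEK_KEY")

    if endpoint_url and api_key:
        request = build_chat_request(
            endpoint_url, api_key, "DeepSeek-R1", "Say hello in one sentence."
        )
        # Reasoning models can take a while, so allow a generous timeout.
        with urllib.request.urlopen(request, timeout=120) as response:
            body = json.load(response)
        print(body["choices"][0]["message"]["content"])
    else:
        print("Set AZURE_DEEPSEEK_ENDPOINT and AZURE_DEEPSEEK_KEY first.")
```

If the call returns a reply, the same three values should work in OpenWebUI. If it fails with an authentication error, try the other header style before assuming the key is wrong.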

Once completed, start a new chat, select DeepSeek-R1, and start testing.

Your data will reside only in your Azure tenant and will not be shared with anyone. If you are running OpenWebUI locally, then any saved messages will only reside on your local machine.

Alternatively, you could host OpenWebUI on Azure, and once again any information would reside only in your tenant.

Hopefully this helps you run DeepSeek-R1 securely.